March quick-takes

Recap of my short posts on LinkedIn in March

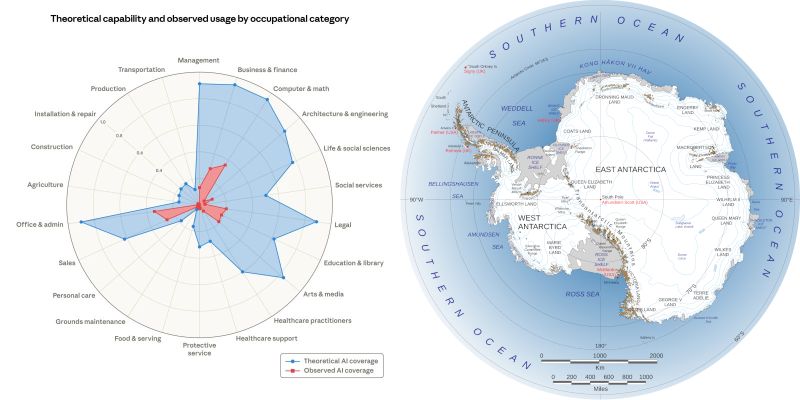

Antarctica in the AI Chart

You know what I see when I look at this chart, being shared by everyone in the past few days? I see Antarctica. 😅

We can talk AI capabilities all day, but I can't unsee it. Human pattern-matching at its finest!

AI-Assisted Development Talk at Python Zagreb

Yesterday I talked about vibe coding and responsible AI-assisted development to a packed room at the Python Zagreb meetup, one of my favourite venues.

I covered recent LLM advances, different approaches to coding with AI (YOLO vibe coding vs. actually caring about the resulting code), best practices, team dynamics and the future outlook. I also did a little bit of demoing: an AI agent was autonomously coding a Meetup platform clone while I was presenting.

We had a great Q&A and discussion afterwards! This is a hot (and hyped up) topic, and I'm heartened to see people taking code quality, maintenance and overall “good engineering” practices seriously. There's no fate but what we make for ourselves.

The talk was an updated version of the one I gave a few months ago at several venues. “Updated” is selling it short: virtually all the slides, and most of the content, had to change. There's been an enormous amount of progress in the past few months! I expect I'll have to update it again before too long :)

The prompts, resulting code, and presentation slides are all available in the github.com:senko/python-zg-vibe-speeka Git repository.

Thank you Python Zagreb for having me!

Non-Techies “Accidentally” Building Apps with AI

Yesterday a friend told me he and his wife (both non-techies) “accidentally” coded an internal web app for her work. They wrote down the requirements and pasted them into ChatGPT to see if it could do a mockup. It built an entire app.

This was ChatGPT, not even an app builder like Lovable or Replit, and neither of them has any software development knowledge. They did, however, stumble on the most important bit – clearly specify what you want built.

Of course they already knew AI can do stuff like that (one reason being I bore him to death with it each time we grab a cup of coffee :), but were still blown away by seeing it in action.

Stipe's experience is another example of what the future holds. Forget AI building the next $1T startup: we're entering an era of bespoke single-user software – for fun, for solving niche problems.

Zvonimir Sabljić's idea of “citizen developers” sounded slightly insane back in 2023. A few years later, we can see examples everywhere around us.

1M Context Now Generally Available for Claude

Anthropic has made the 1M context window for Claude Opus/Sonnet 4.6 generally available in Claude Code and Claude.ai, and removed the price premium (i.e. no extra charge for large-context usage): announcement

For coding, this is huge. On a recent project involving a few thousand lines of C code, I was constantly hitting the context limit: the language was just too verbose and the problem had too many important details to comfortably fit under 200k tokens.

I've been playing with this for a few days and it doesn't feel different from before, except that I no longer have to keep an eye on context usage. (Aside: they added a tiny tweak to Claude Code's “clear context and implement plan” option, which now shows how much context is in use. Very helpful when you're trying to decide whether to clear or not.)

It remains to be seen whether the long context holds up in practice. Benchmarks say recall is high (78% on 1M tokens), but practice often differs. I'm also hoping this improved recall at smaller context sizes (200–400k), which would be a huge win in and of itself.

Another goodie (especially for folks in European, African and Asian time zones): Anthropic is temporarily doubling usage limits outside US peak hours:

- 2x usage on weekdays outside 5–11am PT / 12–6pm GMT / 1–7pm CET

- 2x usage all day on weekends
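To make the windows above concrete, here is a minimal Python sketch that classifies a timestamp as off-peak or not. It is only an illustration, assuming the quoted GMT hours (12:00 to 18:00 on weekdays) are the peak window, ignoring daylight-saving shifts, and judging "weekend" in UTC; `is_off_peak` and the constants are hypothetical names, not anything Anthropic publishes.

```python
from datetime import datetime, timedelta, timezone

# Assumption: peak hours are 12:00-18:00 GMT/UTC on weekdays, as quoted in the post.
# Daylight-saving shifts are ignored and "weekend" is judged in UTC.
PEAK_START_UTC = 12  # inclusive
PEAK_END_UTC = 18    # exclusive


def is_off_peak(moment: datetime) -> bool:
    """Return True if `moment` falls in a (hypothetical) 2x usage window."""
    if moment.tzinfo is None:
        raise ValueError("pass a timezone-aware datetime")
    utc = moment.astimezone(timezone.utc)
    if utc.weekday() >= 5:  # Saturday (5) or Sunday (6): 2x all day
        return True
    return not (PEAK_START_UTC <= utc.hour < PEAK_END_UTC)


CET = timezone(timedelta(hours=1))

# Wednesday, 18 March 2026
print(is_off_peak(datetime(2026, 3, 18, 9, 0, tzinfo=CET)))    # True  (08:00 UTC)
print(is_off_peak(datetime(2026, 3, 18, 13, 30, tzinfo=CET)))  # False (12:30 UTC)
print(is_off_peak(datetime(2026, 3, 18, 19, 0, tzinfo=CET)))   # True  (18:00 UTC)
# Saturday, 21 March 2026
print(is_off_peak(datetime(2026, 3, 21, 14, 0, tzinfo=CET)))   # True
```

Converting everything to UTC first keeps the comparison simple; a fuller version would use real time zone rules for PT to track daylight-saving changes.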

This is likely an experiment to see if they can “bribe” people to offload usage to off-peak hours (similar to how many electricity companies operate). This can make Claude Pro ($20/mo) more usable, esp. if we start to (ab)use the 1M context windows. It'll be interesting to see if the “temporary 2 weeks” ends up permanent.

ChatGPT's Click-Baity Answers

I've been seeing more and more “click-baity” answers from ChatGPT (Pro). The bot answers, but concludes with (verbatim phrasing, details omitted):

If you want, I can also point out the one mistake that causes these [...]

If you want, I can also show one trick used in studios for [...]

If you want, I can also show one placement trick that makes [...]

Most assistants can't shut up and keep asking if you'd like to follow up, but I hadn't previously seen this click-baity “this one trick/mistake/secret” phrasing.

I asked around and apparently this is a common occurrence (at least on free ChatGPT plans), so people already mentally tune it out, but I only noticed it a few days ago. I'm not sure whether I just wasn't paying attention before, or had somehow managed to avoid it so far.

There's no chance this is a coincidence, as it happens too often, but only for specific types of questions (this one was about home improvement / DIY). It feels very much like the LLM has already been aligned to lead with an in-content ad, but there's no ad inventory yet, so the follow-up answers (to my “yes, please”) are just vanilla.

This could also be market research by OpenAI: gathering data on which kinds of queries make people want the “one trick” info, and would therefore be amenable to ads.

Below-the-Radar LLM Updates

A bunch of below-the-radar LLM updates this week: OpenAI releases GPT-5.4 mini and nano, while the small open model scene heats up with Mistral Small 4 and Nemotron 3 Super. Oh, and there's a new Mamba (v3)!

Good week so far for the fans of small and/or open models – and it's only Wednesday! The models are so varied there's no point comparing them head to head, so I'll just list the highlights.

Let's start with OpenAI. If you're using their smaller models, upgrading to GPT-5.4 Mini or Nano is a no-brainer. They're still noticeably smaller / less capable models, so don't try to replace full GPT-5 or even 4.1 with 5.4 Mini. But for simple, straightforward tasks, it's a clear upgrade.

Mistral's new Small 4 model has 119B total params (6B active) with 256k context window and configurable reasoning effort, released under Apache 2.0 license.

Nemotron 3 Super is NVidia's 120B total (12B active) model. Notably, it uses a hybrid transformer/Mamba architecture and was pretrained in 4-bit precision (NVFP4). Nemotron is also released under an open license and provides (some?) pretraining and post-training data.

With previous open models also targeting the ~120B size (GPT-OSS 120b and Qwen3.5), this might become a sweet spot for capable models that can still be run on consumer hardware (e.g. Mac Studio) or cheaper cards (“cheaper” here is relative to the top NVidia models; still quite pricey!).
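Whether a ~120B model fits on a given machine is mostly simple arithmetic: the weights alone need roughly (parameter count × bits per parameter ÷ 8) bytes. For mixture-of-experts models like these, it's the total parameter count that has to sit in memory; the active parameters mainly determine compute per token. A rough Python sketch, assuming weights dominate and ignoring the KV cache, activations, quantization scale factors and runtime overhead (so real requirements are higher); `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(total_params_billion: float, bits_per_param: float) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 10**9 bytes).

    1e9 params * (bits / 8) bytes per param = (bits / 8) GB per billion params.
    Ignores KV cache, activations, quantization scales and runtime overhead.
    """
    return total_params_billion * bits_per_param / 8


# Total (not active) parameter counts from the post; all experts must be loaded.
models = {"Mistral Small 4": 119, "Nemotron 3 Super": 120}

for name, params_b in models.items():
    for bits in (16, 8, 4):
        print(f"{name:18} {bits:>2}-bit: ~{weight_memory_gb(params_b, bits):.1f} GB")
```

At 4 bits both models land around 60 GB of weights, within reach of a high-memory Mac Studio configuration, while 16-bit weights need roughly 240 GB.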

Speaking of Mamba (a different architecture for LLMs), the team behind it just released Mamba 3! They also provide an AI model, but the main thing here is the architecture update, which is mainly of interest to LLM researchers.

Quite a week so far! With a mysterious new “Hunter Alpha” model appearing on OpenRouter, DeepSeek V4 expected imminently, and an unconfirmed MiniMax 2.7 model (again on OpenRouter), all 1T-sized, expect more exciting news for AI geeks soon. (edit: turns out it's Xiaomi MiMo-V2-Pro, see comments)

(it is a bit tiring to keep track of it all, I can tell you that...)

AI Research Papers and the Fast-Moving Industry

The trouble with research papers measuring impact, effects, or quality of LLM-based systems is that the industry moves way too fast – by the time the results are published, they're of interest only to historians.

Today I read two relatively fresh research papers (both from 2026):

- Survey on LLMs for Spreadsheet Intelligence (how well LLMs operate on spreadsheets)

- Speed at the Cost of Quality (long-term impact of using Cursor in open source projects)

Both are very interesting papers, but the Spreadsheet Intelligence survey cites GPT-4o, while the Cursor one uses data from August 2025. The frontier has moved so much since then that the conclusions may differ greatly from the current state.

Since people usually repost the juicy bits without these (or other) caveats, information quickly turns into misinformation. When you see a “research has shown” post about AI, whatever the conclusions, check the date.

I feel for these researchers. They're doing the important (and, let's be frank, tedious) work of surveying the current state of things. It's just that the current state is moving so fast.

The Bespoke Software Revolution?

With all due respect to what Jason Fried and the crew from 37Signals/Basecamp have achieved, this take is wrong.

Bespoke software does exist. And yes, consultants small and large have built, deployed, and charged through the roof for bespoke software. And often it sucks. Here's why: clients can't coherently describe what they need and don't have a budget, consultancies don't care, and, critically, the person writing the spec (and controlling the budget) isn't the person who will use it. (Here you also have “A Tragedy of EdTech” in one sentence, but that's a different post.)

But there's another kind of bespoke software, which, for lack of a better name, I'll unimaginatively call “internal tool”. This is what VB6/Access/VBA/HyperCard enabled back in the day, what Retool tried to own recently, and what many Excel spreadsheets are secretly doing.

This is duct-taped-code-pasta that barely holds together but does exactly what the business needs, and nothing more. I've already seen and heard of many cases of non-techies doing exactly that. It's not scalable, it's not maintainable, it doesn't follow best practices, it doesn't have tests or docs, but none of that matters, because it works and solves a biz problem.

The reason it works is that the person can iteratively narrow down to what they need, feedback is instant, iteration is minutes not days or weeks and is super cheap (compared to external developers).

No sane freelancer or agency would ship something like it, for many reasons: as a software engineer you want to ship a quality product and charge an appropriate amount of money. Many times, that's the right thing for the customers.

Often, it's overkill, and these types of smaller “quick win” projects never get started in the first place. And there's loads of potential projects like these!

So yeah, nobody will vibe-code a payroll system for a 100+ person company, nor should they. But people absolutely will, and already do, whip up something that solves their niche problem. Now maybe they'll use AI instead of Excel.

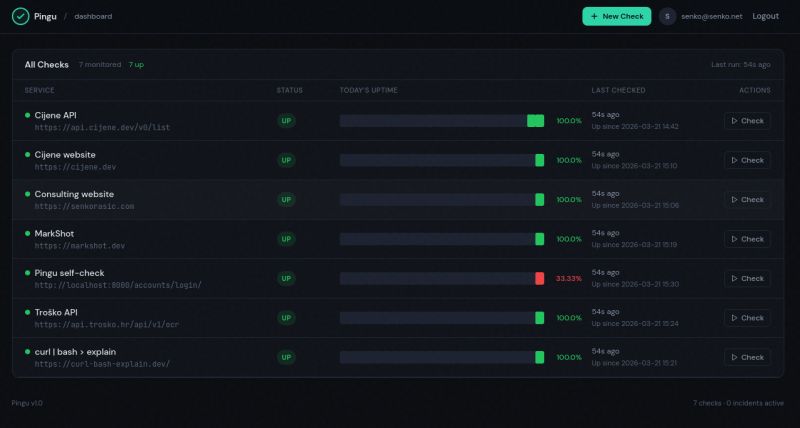

Is AI Killing SaaS?

Is AI killing SaaS? No, but it raises the bar. There are many cases where people previously had to reach for a SaaS solution and now don't have to.

Case in point: a simple website status checker with alerts. Nothing fancy, but there is some amount of work involved, and previously it would have been easier to use a SaaS. Probably a free tier to begin with, then an upgrade to a higher plan once I had a few URLs to monitor, wanted a higher frequency or some other premium feature.

Now I built it in between helping my kid with some school work and cooking lunch: Pingu

What about reliability, maintenance, etc?

1) I don't need HA on this one. This is on a separate service from the other hosts and I highly doubt they'll all go down at the same time. If Pingu goes down, I don't care (also I can easily set up another instance of Pingu that only monitors Pingu, and the two can monitor each other if I so choose)

2) Maintenance: I don't expect any ongoing work on this. It's going to sit in its corner and do its thing, and perhaps once in never I'm going to update it or add a small tweak. A codebase this size is easy for a modern AI to handle.

3) I haven't looked at the code at all, but I did ask another AI to perform a security check :)

4) It's only deployed internally (no outside access), as it's not designed for wide use – however, it's open source!

5) The full spec it was built from is also open source, and I'll attach the initial prompt it all started from in the comments, since this post is already large enough.
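For readers curious what the core of such a tool involves, here is a minimal, hypothetical Python sketch of a status checker with alerts. It is not Pingu's code. It polls each URL with the standard library's `urllib`, treats any 2xx/3xx response within the timeout as "up", and alerts only when a URL changes state, so a site that stays down doesn't trigger an alert on every check. The function names, the 10-second timeout and the 60-second interval are assumptions.

```python
import http.client
import time
import urllib.request
from collections.abc import Callable


def check_url(url: str, timeout: float = 10.0) -> bool:
    """Return True if `url` answers with a 2xx/3xx status within `timeout` seconds."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except (OSError, http.client.HTTPException, ValueError):
        # OSError covers URLError/HTTPError (4xx/5xx), timeouts and connection errors;
        # HTTPException covers malformed responses; ValueError covers malformed URLs.
        return False


def state_changes(previous: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Return alert messages only for URLs whose up/down state changed."""
    alerts = []
    for url, is_up in current.items():
        was_up = previous.get(url, True)  # unseen URLs are assumed up: only "down" alerts
        if was_up and not is_up:
            alerts.append(f"DOWN: {url}")
        elif not was_up and is_up:
            alerts.append(f"RECOVERED: {url}")
    return alerts


def run_once(
    urls: list[str],
    previous: dict[str, bool],
    checker: Callable[[str], bool] = check_url,
) -> tuple[dict[str, bool], list[str]]:
    """Check every URL once; return the new state and any alerts to send."""
    current = {url: checker(url) for url in urls}
    return current, state_changes(previous, current)


def monitor(urls: list[str], send_alert: Callable[[str], None], interval: float = 60.0) -> None:
    """Poll forever, calling `send_alert` for each state change."""
    state: dict[str, bool] = {}
    while True:
        state, alerts = run_once(urls, state)
        for message in alerts:
            send_alert(message)
        time.sleep(interval)
```

Calling `monitor(["https://example.com/"], send_alert=print)` would print alerts to the console; a real setup would send email or chat messages instead. Keeping the state-change logic separate from the network call makes it easy to test with a fake checker.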

LiteLLM Supply Chain Attack

A massive supply chain attack hit LiteLLM AI Gateway, a very popular LLM wrapper/library/proxy package for Python. The attackers gained control via a trojanized version of the Trivy security scanner that LiteLLM uses, then published malicious LiteLLM releases that steal keys and sensitive info from users who install them.

Detailed incident timeline, Hacker News discussion thread (includes the package maintainers).

I use litellm in my code and my “think-llm” Python package depends on it. Thankfully I haven't updated any of the projects in the past 24 hours (or installed it in new ones), but this is yet another example of how we need to seriously rethink the software supply chain infrastructure we all rely on.
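One common mitigation is to pin dependencies to exact versions together with their hashes, so a newly published (possibly malicious) release is never pulled in silently. pip supports this via hash-checking mode (`pip install --require-hashes -r requirements.txt`, with `--hash=sha256:...` entries next to each `==` pin), and pip-tools can generate such entries with `pip-compile --generate-hashes`. This doesn't help if the pinned release itself is compromised, but it does stop surprise upgrades. The sketch below shows the underlying check in plain Python, assuming you already have a trusted SHA-256 digest recorded somewhere; `sha256_of` and `verify_artifact` are hypothetical helpers, not part of pip.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks and return its SHA-256 hex digest."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_sha256: str) -> None:
    """Raise if the downloaded file does not match the pinned digest."""
    actual = sha256_of(path)
    if actual != expected_sha256.strip().lower():
        raise RuntimeError(
            f"hash mismatch for {path.name}: expected {expected_sha256}, got {actual}"
        )
```

Reading in chunks keeps memory use flat even for large wheels or archives.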

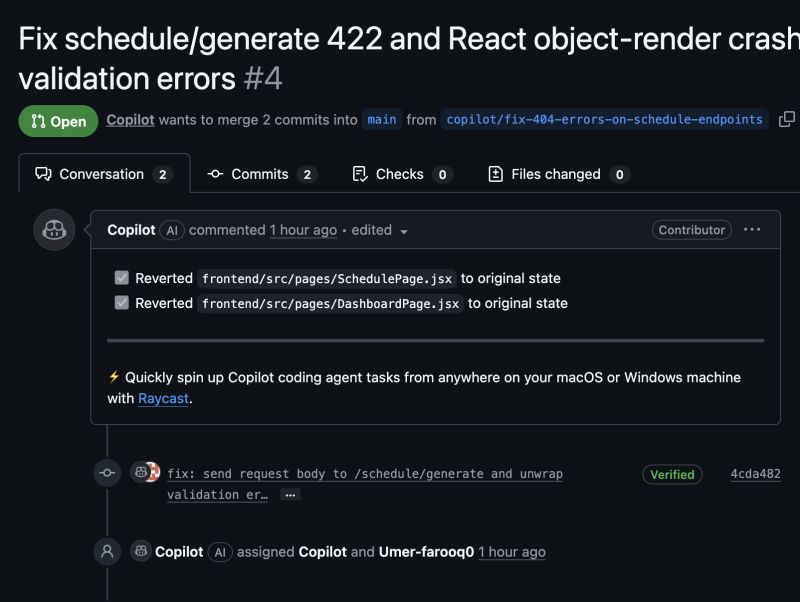

GitHub Copilot's Worrying Trajectory

GitHub Copilot was one of the first – if not THE first – really good AI coding tools. They seem to have dropped the ball since, and their latest announcements and behaviour make it worse.

A week ago they announced they'll start training on our code by default from April 24th. To disable this, you have to explicitly opt out at Settings → Copilot → Features. If you host any code on GitHub, make sure you check that setting!

Now it has also emerged that they're injecting ads into auto-generated pull requests (they call them “tips”, but let's call a spade a spade). This is a pretty serious breach of customer trust, and to make matters worse, it wasn't even announced anywhere.

Their recent instability and outages could be chalked up to a massive spike in usage, as huge numbers of people vibe-code and it all ends up on GitHub. Taken together with the questionable tactics mentioned above, though, it presents a worrying trend.

Combine this with a massive flood of poor-quality PRs and Issues that many popular open source repositories are now getting (because people are using AI to game “stars” and “activity” stats on GitHub), and you've got a reasonable argument for hosting your stuff elsewhere.

Is Microsoft finally losing its patience, and strangling the open-source golden goose it bought in '18 for $7.5B?

Zagreb Cursor Hackathon Recap

Last weekend I was honored to be on the judging panel for the first Zagreb Cursor Hackathon, where more than 20 teams built creative projects around the “Make something Zagreb wants” theme.

The hackathon was organized by Nico Möhn, supported by Cursor, hosted by Microblink, with friends from Google Developer Group (GDG) Zagreb pitching in with some Google AI credits.

The results were awe-inspiring. I've been at many hackathons before, and most of them are heavy on the idea, recognizing you can't build much in just a few hours, so teams usually present a proof of concept, an interactive mockup, or a presentation.

Not this Saturday. Virtually everyone had a fully working demo – from real-time calculation of sun & shade so you can find your favorite neighbourhood picnic spot, to volunteering platforms, waste management education through gamification and Pokemon Go for stray cats.

There were more than a few apps that I'd love to use regularly as a citizen of Zagreb, including the FixZG app (winners of the hackathon), a social way to report problems in your neighbourhood, and KajKam, a waste sorting education app by Ivan Zidov & co. I think Dražen Lučanin would be proud (and should look into some of these entries!)

My co-judges Antonio Hadrović, Vincenzo D'Elia and Domagoj Vuković and I had a really tough job! I wish we could have spent several more hours reviewing the submissions – but the participants probably wouldn't have liked to wait that long :)

Congrats to all the participants and big props to Nico for organizing the event!